Orchestrating Trust: Building Reliable Data Systems for Social Impact

How production-grade orchestration enables impact at scale

Systems built for social impact are often judged by their intent. The assumption is that good outcomes naturally follow from good goals, and that technical sophistication is secondary to mission. In practice, the opposite is often true. When information systems fail, the cost is not measured in lost revenue or delayed insights, but in missed opportunities for help, support, or timely intervention.

At scale, social impact is not a matter of aspiration. It is a matter of reliability.

Platforms that serve vulnerable populations operate under constraints that are both technical and ethical. Information must be accurate, current, and accessible. It must adapt as the world changes. It must withstand uneven demand and tolerate partial failure without collapsing. Most importantly, it must continue operating without constant human supervision, because manual intervention does not scale to moments of urgency.

These requirements are familiar to anyone who has built systems for finance, logistics, or large-scale commerce. What differs is the margin for error. In social contexts, latency is not an inconvenience. Inconsistency is not an annoyance. Failure is not an abstract metric. The system either delivers trustworthy information when it is needed, or it does not.

This is where data architecture quietly becomes social infrastructure.

The hidden fragility of well-intentioned systems

Many social platforms begin with pragmatic solutions. Data is collected from disparate sources, normalized through custom pipelines, and exposed through simple interfaces. Early success reinforces the approach: the system works, organizations adopt it, and impact grows.

Over time, however, complexity accumulates. Data sources evolve independently. Update cycles diverge. Quality varies across contributors. What once felt manageable starts to strain under its own assumptions.

In building social data platforms, we learned that fragility rarely appears all at once. It emerges gradually. Pipelines grow longer. Reprocessing becomes broader than necessary. Validation shifts from design to manual oversight. Eventually, the system still functions, but confidence in its outputs begins to erode.

When correctness depends on human vigilance, availability depends on institutional memory. When updates become opaque, trust shifts away from architecture toward individual heroics. For systems intended to support people under real-world pressure, this is an unsustainable state.

The problem is not a lack of data or compute. It is a lack of structural guarantees.

From pipelines to obligations

Traditional data pipelines are designed around execution. They define a sequence of tasks that transform inputs into outputs. This model assumes that intermediate states are transient and that value resides primarily at the end of the flow.

In social data systems, this assumption does not hold.

Normalized datasets, enriched resources, derived aggregates: these are not disposable by-products. They are durable artefacts with meaning beyond a single run. They are reused, audited, compared over time, and relied upon by downstream organizations making decisions under uncertainty.

Once data outputs are treated as obligations rather than by-products, the role of orchestration changes fundamentally. The system’s responsibility is no longer to run jobs, but to ensure that specific states of data exist, remain current, and remain explainable.

This distinction matters because obligations persist. They require guarantees: freshness, lineage, and reproducibility. They require the system to know what it has produced, what it depends on, and what must change when assumptions shift.

In this framing, orchestration stops being an operational convenience and becomes a form of governance.

Reliability under real-world constraints

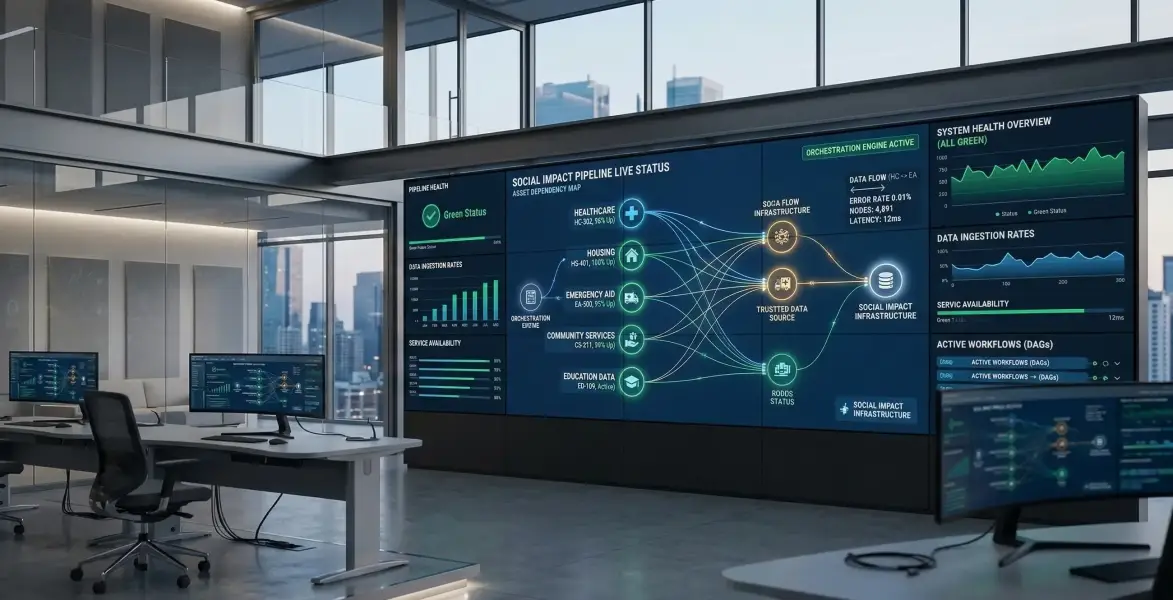

These principles became tangible while building Connect211, a modern search platform designed to support 211 organizations operating across multiple U.S. states. The platform aggregates resource data from independent organizations, each maintaining its own systems, taxonomies, and update rhythms.

What we learned early is that reliability in such an environment cannot be retrofitted. Data sources change independently. Failures are localized. Demand is uneven and often event-driven. Manual coordination quickly becomes the bottleneck.

Meeting these constraints required treating data artefacts as first-class citizens. Each normalized dataset, each enrichment step, each derived index represents a commitment: this information must exist, must be correct, and must be traceable back to its origins.

Asset-oriented orchestration provided a natural way to express these commitments. Instead of reasoning about execution order, the system reasons about data state. Instead of pushing data through pipelines, it ensures that required artefacts are materialized and kept current as upstream conditions change.
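To make "reasoning about data state" concrete, here is a minimal sketch in plain Python. It is not Dagster's API and not the Connect211 implementation; `Asset`, `Store`, `publish`, and `reconcile` are hypothetical names, and the asset names are illustrative. The idea it shows: each artefact remembers which upstream versions it was built from, and only artefacts whose upstream has moved on are rebuilt.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Asset:
    """A named data artefact plus the function that produces it (hypothetical model)."""
    name: str
    deps: Tuple[str, ...]
    compute: Callable[..., object]


@dataclass
class Store:
    """Materialized values, their versions, and the upstream versions each was built from."""
    values: Dict[str, object] = field(default_factory=dict)
    versions: Dict[str, int] = field(default_factory=dict)
    built_from: Dict[str, Dict[str, int]] = field(default_factory=dict)


def publish(store: Store, name: str, value: object) -> None:
    """Record an externally supplied update to a source asset and bump its version."""
    store.values[name] = value
    store.versions[name] = store.versions.get(name, 0) + 1
    store.built_from[name] = {}


def is_stale(asset: Asset, store: Store) -> bool:
    """Stale means never built, or built from upstream versions that have since changed."""
    if asset.name not in store.versions:
        return True
    seen = store.built_from[asset.name]
    return any(store.versions.get(d) != seen.get(d) for d in asset.deps)


def reconcile(assets: List[Asset], store: Store) -> List[str]:
    """Rebuild only stale assets. Assumes `assets` is listed upstream-first."""
    rebuilt = []
    for asset in assets:
        if is_stale(asset, store):
            inputs = [store.values[d] for d in asset.deps]
            store.values[asset.name] = asset.compute(*inputs)
            store.versions[asset.name] = store.versions.get(asset.name, 0) + 1
            store.built_from[asset.name] = {d: store.versions[d] for d in asset.deps}
            rebuilt.append(asset.name)
    return rebuilt


assets = [
    Asset("raw_resources", (), lambda: [" Food Bank ", "shelter"]),
    Asset("normalized", ("raw_resources",), lambda raw: [r.strip().lower() for r in raw]),
    Asset("search_index", ("normalized",), lambda rows: {r: i for i, r in enumerate(rows)}),
]
store = Store()
print(reconcile(assets, store))  # first pass builds all three assets
print(reconcile(assets, store))  # nothing changed upstream, so nothing is rebuilt
publish(store, "raw_resources", [" Clinic "])
print(reconcile(assets, store))  # only the assets downstream of the change are rebuilt
```

The loop never asks "what runs next?". It asks, for each artefact, whether the state it promises still holds, which is the shift in perspective described above.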

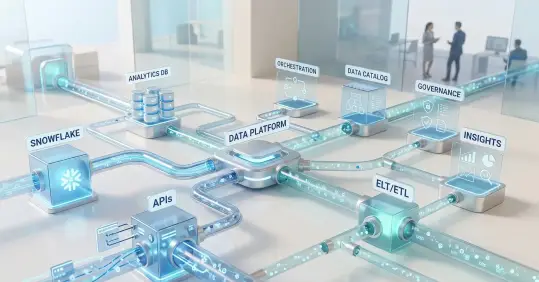

Dagster’s asset-based model aligned closely with this way of thinking. It allowed us to encode not only how data is processed, but what must be true for the system to be considered healthy. Orchestration became a mechanism for maintaining trust rather than merely coordinating tasks.

Automation without opacity

Automation is often presented as a universal solution to scale. In social systems, automation without structure can be as dangerous as manual fragility. When updates propagate automatically but their causes remain hidden, errors scale just as efficiently as value.

What distinguishes resilient systems is not the absence of automation, but the presence of clarity. Asset-based orchestration preserves the narrative of the data. Every artefact carries its provenance. Every update has a reason. When stakeholders ask why information changed, the answer is embedded in the structure of the system itself.

In environments where information influences real-world outcomes, this explainability underpins legitimacy. Trust is not established through assurances, but through the ability to demonstrate correctness when it matters.

Automation, in this sense, is not about removing humans from the loop. It is about ensuring that when humans intervene, they do so with understanding rather than guesswork.

Social impact as an emergent property

It is tempting to frame social impact in terms of outcomes alone. Did the platform help more people? Did it improve access? Did it reduce friction?

These questions are essential, but they are downstream. They describe effects, not causes.

At scale, social impact emerges from systems that behave predictably under stress. From platforms that continue operating as inputs change unexpectedly. From architectures that degrade gracefully rather than fail catastrophically. From data systems that are transparent by design rather than opaque by accident.

The same principles that govern production-grade platforms in commercial domains apply here, but with heightened stakes. Reliability is not an optimization. It is the foundation upon which impact rests.

In this light, orchestration is not a technical detail. It is part of the social contract embedded in the system, defining how obligations are met, how failures are contained, and how trust is maintained over time.

Beyond mission-driven engineering

There is a persistent tendency to treat social platforms as exceptional, worthy of different standards because their goals are noble. In practice, this often leads to underinvestment in architecture, justified by urgency or limited resources.

Our experience suggests the opposite conclusion. When tolerance for error is low and consequences are real, architectural rigor becomes more important, not less. Production-grade data systems are not at odds with social missions. They are prerequisites for sustaining them.

Asset-based orchestration, exemplified by tools like Dagster, provides a framework for expressing this rigor. It shifts focus from execution to responsibility, from pipelines to promises. It allows systems to scale not only in size, but in trustworthiness.

Social impact does not arise from technology alone. But without reliable systems, even the strongest intentions struggle to translate into lasting effect. When data platforms are designed as social infrastructure, reliability ceases to be a purely technical concern and becomes a public good.

FAQ: Production-Grade Orchestration for Social Impact Systems

What is asset-based orchestration in data engineering?

Asset-based orchestration is an architectural approach where data artefacts (datasets, models, indexes, aggregates) are treated as first-class citizens rather than by-products of pipeline runs.

Instead of defining execution steps, you define data states that must exist and their dependencies. The orchestration system ensures:

- Correct dependency resolution

- Incremental recomputation

- Freshness guarantees

- Lineage tracking

- Failure isolation

This shifts orchestration from task coordination to state governance.
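Dependency resolution, the first item in the list above, can be sketched with Python's standard-library `graphlib` module. This is an illustration only, not how any particular orchestrator implements it; the asset names in `DEPENDENCIES` are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical asset graph: each asset maps to the set of assets it depends on.
DEPENDENCIES = {
    "raw_provider_a": set(),
    "raw_provider_b": set(),
    "normalized_resources": {"raw_provider_a", "raw_provider_b"},
    "enriched_resources": {"normalized_resources"},
    "search_index": {"enriched_resources"},
}


def materialization_order(deps):
    """Return asset names so that every asset comes after everything it depends on."""
    return list(TopologicalSorter(deps).static_order())


order = materialization_order(DEPENDENCIES)
print(order)

# A circular declaration is rejected up front instead of failing halfway through a run.
try:
    materialization_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("cycle detected")
```

Because the graph is declared rather than implied by script order, the system can validate it before any data is touched.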

How is asset-based orchestration different from traditional pipelines?

Traditional pipelines are execution-oriented:

- Step A → Step B → Step C

- Outputs are transient

- Reprocessing is often coarse-grained

Asset-based systems are state-oriented:

- Explicit dependency graphs

- Selective re-materialization

- Persistent artefacts with lineage

- Declarative data contracts

The key difference is that pipelines answer:

“What runs next?”

Asset-based orchestration answers:

“What must be true about the data?”

For systems operating under strict reliability constraints, that distinction is critical.

Why does data lineage matter in social impact systems?

In social systems, incorrect data can influence:

- Access to services

- Emergency response decisions

- Resource allocation

- Regulatory reporting

Lineage provides:

- Auditability

- Explainability

- Reproducibility

- Impact traceability

When stakeholders ask, “Why did this information change?”, lineage allows engineering teams to answer with certainty, not speculation.
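One way to picture this is a log of materialization records that can be walked upstream. The sketch below is a simplified illustration under assumed names (`Materialization`, `explain_change`, and the sample assets are hypothetical); real orchestrators store richer metadata.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Materialization:
    """One recorded build of an asset (hypothetical record shape)."""
    asset: str
    version: int
    inputs: Dict[str, int]  # upstream asset name -> version that was consumed
    reason: str


def explain_change(log: List[Materialization], asset: str, version: int) -> List[str]:
    """Walk upstream from one asset version and list why each step was produced."""
    index = {(m.asset, m.version): m for m in log}
    lines: List[str] = []
    stack = [(asset, version, 0)]
    while stack:
        name, ver, depth = stack.pop()
        record = index.get((name, ver))
        indent = "  " * depth
        if record is None:
            # A missing record is itself a finding: lineage has a gap here.
            lines.append(f"{indent}{name} v{ver}: no record (lineage gap)")
            continue
        lines.append(f"{indent}{name} v{ver}: {record.reason}")
        # Push in reverse order so upstream assets are visited alphabetically.
        for up_name, up_ver in sorted(record.inputs.items(), reverse=True):
            stack.append((up_name, up_ver, depth + 1))
    return lines


log = [
    Materialization("raw_provider_a", 2, {}, "provider pushed updated opening hours"),
    Materialization("normalized_resources", 5, {"raw_provider_a": 2}, "upstream raw_provider_a changed"),
    Materialization("search_index", 9, {"normalized_resources": 5}, "upstream normalized_resources changed"),
]
for line in explain_change(log, "search_index", 9):
    print(line)
```

The answer to "why did this change?" is then a query over recorded facts rather than a reconstruction from memory.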

What architectural risks do social data platforms typically face?

Common failure modes include:

- Silent schema drift from independent data providers

- Broad, expensive reprocessing triggered by minor upstream changes

- Manual validation becoming a hidden operational dependency

- Lack of observability into partial failures

- Tight coupling between ingestion and serving layers

Without structural guarantees, reliability degrades gradually, often without obvious alerts.
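Silent schema drift, the first failure mode listed above, is one of the easier ones to turn into an explicit check. The following is a minimal sketch; `EXPECTED_SCHEMA` and its field names are assumptions made for illustration, not a real provider format.

```python
from typing import Dict, List, Mapping

# Hypothetical expected shape of one provider record: field name -> Python type.
EXPECTED_SCHEMA: Dict[str, type] = {
    "id": str,
    "name": str,
    "zip_code": str,
    "phone": str,
}


def detect_drift(record: Mapping[str, object], expected: Mapping[str, type]) -> List[str]:
    """Compare one incoming record against the expected schema and list every mismatch."""
    problems: List[str] = []
    for field_name, field_type in expected.items():
        if field_name not in record:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(record[field_name], field_type):
            actual = type(record[field_name]).__name__
            problems.append(
                f"type changed: {field_name} is {actual}, expected {field_type.__name__}"
            )
    for field_name in record:
        if field_name not in expected:
            problems.append(f"unexpected field: {field_name}")
    return problems


incoming = {"id": "r-1", "name": "Food Bank", "zip_code": 12345, "postal": "12345"}
for problem in detect_drift(incoming, EXPECTED_SCHEMA):
    print(problem)
```

Running a check like this at the ingestion boundary turns a quiet upstream change into a visible, attributable event before it reaches anything downstream.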

How does orchestration contribute to data governance?

Orchestration becomes governance when it encodes:

- Explicit data ownership boundaries

- Dependency contracts

- Freshness expectations

- Failure domains

- Version-aware updates

Rather than governance being a policy document, it becomes embedded in the system’s execution model.

This reduces reliance on institutional memory and tribal knowledge.
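As an illustration of governance expressed in code rather than in a policy document, the sketch below declares an owner and a freshness expectation per asset and checks them mechanically. `Contract`, `CONTRACTS`, the team names, and the time limits are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Contract:
    """Declared expectations for one asset (hypothetical shape)."""
    owner: str
    max_age: timedelta


CONTRACTS: Dict[str, Contract] = {
    "normalized_resources": Contract(owner="ingestion-team", max_age=timedelta(hours=24)),
    "search_index": Contract(owner="search-team", max_age=timedelta(hours=1)),
}


def freshness_violations(
    contracts: Dict[str, Contract],
    last_materialized: Dict[str, datetime],
    now: datetime,
) -> List[Tuple[str, str]]:
    """Return (asset, owner) for every asset that is missing or older than its limit."""
    violations = []
    for asset, contract in contracts.items():
        built_at = last_materialized.get(asset)
        if built_at is None or now - built_at > contract.max_age:
            violations.append((asset, contract.owner))
    return violations


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
last_materialized = {
    "normalized_resources": now - timedelta(hours=3),
    "search_index": now - timedelta(hours=2),
}
print(freshness_violations(CONTRACTS, last_materialized, now))
```

The expectation and the person accountable for it live next to each other, so a violation arrives already routed to someone.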

Is asset-based orchestration only relevant at large scale?

No. It becomes more visible at scale, but its benefits appear earlier:

- Faster iteration cycles

- Safer refactoring

- More predictable deployments

- Lower operational overhead

- Clearer system reasoning

For mission-critical domains, reliability requirements often emerge before traffic scale does.

How does this relate to data observability and reliability engineering?

Asset-based orchestration complements:

- Data observability (freshness, volume, schema monitoring)

- Data reliability engineering practices

- SLA/SLO enforcement

- Incident response workflows

Because dependencies are explicit, blast radius and impact analysis become tractable. Observability signals can be tied directly to defined data obligations.
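With an explicit graph, blast radius is a reachability question. A minimal sketch, assuming a hypothetical `DEPS` mapping from each asset to its upstream assets:

```python
from collections import deque
from typing import Dict, Set

# Hypothetical graph: each asset maps to the set of assets it depends on.
DEPS: Dict[str, Set[str]] = {
    "raw_provider_a": set(),
    "raw_provider_b": set(),
    "normalized_a": {"raw_provider_a"},
    "normalized_b": {"raw_provider_b"},
    "search_index": {"normalized_a", "normalized_b"},
    "report_b": {"normalized_b"},
}


def blast_radius(deps: Dict[str, Set[str]], failed: str) -> Set[str]:
    """Return every asset that directly or transitively depends on `failed`."""
    # Invert the graph so we can walk from an asset to its consumers.
    downstream: Dict[str, Set[str]] = {}
    for asset, upstreams in deps.items():
        for upstream in upstreams:
            downstream.setdefault(upstream, set()).add(asset)

    affected: Set[str] = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for consumer in downstream.get(current, ()):
            if consumer not in affected:
                affected.add(consumer)
                queue.append(consumer)
    return affected


print(sorted(blast_radius(DEPS, "raw_provider_a")))
print(sorted(blast_radius(DEPS, "raw_provider_b")))
```

An alert on one provider feed can then be reported together with the list of artefacts it puts at risk.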

What role does automation play in maintaining trust?

Automation enables scale, but trust requires:

- Transparency

- Traceability

- Deterministic recomputation

- Controlled failure propagation

Well-structured orchestration ensures that automation is explainable, not opaque. Errors do not silently cascade across the system.
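Controlled failure propagation can be sketched as follows: when one asset fails, everything downstream of it is skipped and reported, while unrelated branches keep running. This is an illustrative simplification with hypothetical names (`run_with_isolation`, `fetch_broken`, the asset names), not a description of a specific orchestrator's scheduler.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Set


def run_with_isolation(
    deps: Dict[str, Set[str]],
    computes: Dict[str, Callable[[], None]],
) -> Dict[str, str]:
    """Run assets upstream-first; a failure blocks only its own downstream assets."""
    status: Dict[str, str] = {}
    for name in TopologicalSorter(deps).static_order():
        # Skip anything whose upstream did not succeed, instead of building on bad state.
        if any(status.get(upstream) != "ok" for upstream in deps.get(name, ())):
            status[name] = "skipped"
            continue
        try:
            computes[name]()
            status[name] = "ok"
        except Exception:
            status[name] = "failed"
    return status


def fetch_broken() -> None:
    """Stand-in for a provider feed that is unreachable."""
    raise ConnectionError("provider A is unreachable")


def noop() -> None:
    """Stand-in for a step that succeeds."""


deps = {
    "raw_a": set(),
    "raw_b": set(),
    "normalized_a": {"raw_a"},
    "normalized_b": {"raw_b"},
}
computes = {"raw_a": fetch_broken, "raw_b": noop, "normalized_a": noop, "normalized_b": noop}
print(run_with_isolation(deps, computes))
```

The resulting status map doubles as an explanation: each skipped artefact points back to the upstream failure that caused it.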

When should a team consider moving from pipelines to asset-oriented architecture?

Signals include:

- Increasing reprocessing cost

- Growing dependency complexity

- Difficulty explaining data changes

- Manual intervention becoming routine

- Rising stakeholder sensitivity to correctness

If correctness is non-negotiable, state-aware orchestration becomes a strategic investment rather than an optimization.

About the authors / context

This article is based on our direct experience building production-grade data infrastructure for Connect211 (https://connect211.com), a modern search platform supporting 211 organizations across multiple U.S. states. The insights presented reflect real architectural decisions made while scaling a social impact system operating under strict reliability and data-quality constraints.